There is a concept in rocketry called the tyranny of the rocket equation. NASA astronaut Don Pettit uses it to describe a ruthless constraint: every kilogram of unnecessary mass you add to a rocket has to be paid for many times over in propellant, and that propellant bill grows exponentially with the velocity change the mission demands. The tyranny isn’t a design failure. It’s physics. And the lesson isn’t really about rockets. It’s about what happens when teams don’t deeply understand the constraints they’re operating inside before they start building.

That understanding is what separates teams that iterate toward the right answer from teams that get faster and faster at building the wrong thing.
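To make the constraint concrete, here is a minimal sketch of the Tsiolkovsky rocket equation in Python. It assumes a single stage and treats everything that is not propellant as one lump of dry mass. The function name and the example numbers (a velocity change of 9,400 m/s and a specific impulse of 350 s) are illustrative assumptions, not figures from Pettit.

```python
import math

G0 = 9.80665  # standard gravity in m/s^2, used to convert specific impulse to exhaust velocity


def propellant_mass(dry_mass_kg: float, delta_v_ms: float, isp_s: float) -> float:
    """Propellant needed to give dry_mass_kg a velocity change of delta_v_ms.

    Tsiolkovsky: delta_v = isp * g0 * ln(m_initial / m_final),
    so propellant = m_final * (exp(delta_v / (isp * g0)) - 1).
    Single-stage simplification: m_final is everything that is not propellant.
    """
    exhaust_velocity = isp_s * G0
    return dry_mass_kg * (math.exp(delta_v_ms / exhaust_velocity) - 1.0)


# Illustrative numbers only (assumptions, not mission data).
DELTA_V = 9_400.0  # m/s
ISP = 350.0        # s

per_kg = propellant_mass(1.0, DELTA_V, ISP)
print(f"Propellant per extra kilogram carried: {per_kg:.1f} kg")
print(f"Same kilogram, twice the delta-v: {propellant_mass(1.0, 2 * DELTA_V, ISP):.1f} kg")
```

Under these assumptions, the cost of each extra kilogram is a fixed multiple set by the mission, and that multiple is what grows exponentially as the required velocity change goes up.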

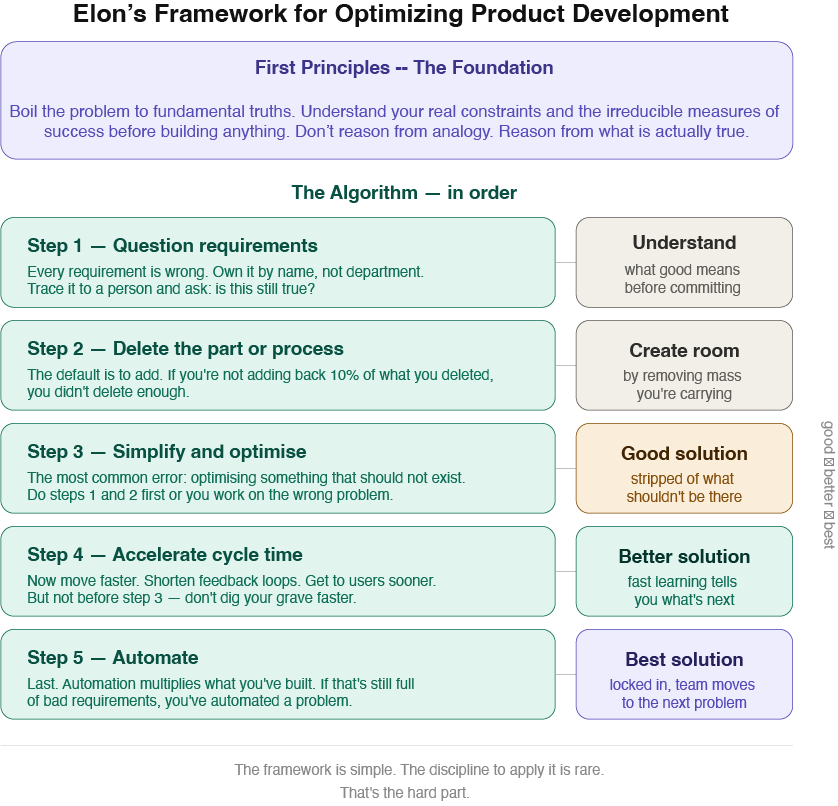

Elon Musk has two mental models worth understanding together. The first is first principles thinking, which is the foundation. The second is a five-step engineering process he calls “the algorithm.” Neither works without the other.

First, Understand Your Constraints

Before any of the process matters, a team has to know what it’s actually trying to accomplish and what it’s up against. First principles thinking starts there.

Most teams reason by analogy. We look at what the previous version of the product did, what competitors are shipping, what worked last quarter, and we iterate from there. The problem isn’t the iteration — it’s that the starting point was never examined. We inherit constraints we never questioned and optimize inside boundaries we never chose.

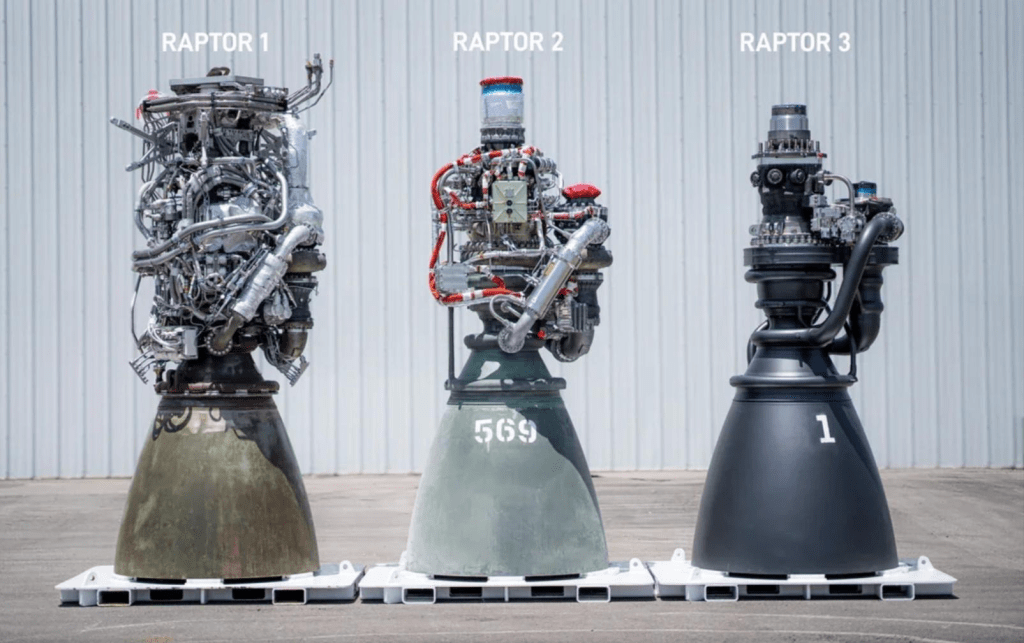

Musk’s framing is to boil a problem down to its most fundamental truths and reason up from there. When he wanted to build rockets, he found they cost $65 million. Rather than accept that as a constraint, he asked what a rocket is actually made of and what those materials cost. The gap between raw material cost and finished market price wasn’t physics — it was accumulated assumption. That gap became SpaceX.

For product teams, the equivalent question is: what are the fundamental measures of success for this product, and what is the real relationship between them? Not the proxy metrics. Not the dashboard your team inherited. The actual truths underneath. What does the user need to accomplish? What does success look like at the level of physics — the irreducible thing you are trying to do?

If a team can’t answer that clearly, no amount of process will save them. They’ll just optimize inside the wrong constraints.

The Algorithm

Once you understand your constraints and your fundamental measures, the algorithm is how you work toward them. The steps have to happen in order — that’s the whole point.

Step 1: Make the requirements less dumb.

Every requirement is wrong. It doesn’t matter who gave it to you — in fact the smarter the person, the more dangerous their requirement, because you’re less likely to push back. Musk’s rule: every requirement must be owned by a name, not a department. You can ask a person why something exists. You cannot ask a department.

Product teams accumulate requirements the same way rockets accumulate mass — incrementally, with good intentions, over time. The PRD inherits from the last PRD. Nobody re-examines the premise. The discipline of step 1 is to trace every requirement back to a person and ask them directly: is this still true?
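One way a team might run that trace is a simple audit over its requirements list. The sketch below is hypothetical: the `Requirement` fields, the `needs_tracing` helper, and the sample entries are all assumptions made for illustration, not part of Musk's process.

```python
from dataclasses import dataclass


@dataclass
class Requirement:
    text: str
    owner: str             # who you would ask "is this still true?"
    owner_is_person: bool  # False when the "owner" is a team or department


def needs_tracing(requirements: list[Requirement]) -> list[Requirement]:
    """Return requirements that no named person owns, so nobody can be asked why they exist."""
    return [
        r for r in requirements
        if not r.owner.strip() or not r.owner_is_person
    ]


# Hypothetical sample data.
backlog = [
    Requirement("Export must support CSV", owner="Priya N.", owner_is_person=True),
    Requirement("All forms need a confirmation step", owner="Legal", owner_is_person=False),
    Requirement("Onboarding must take five screens", owner="", owner_is_person=False),
]

for r in needs_tracing(backlog):
    print(f"No named owner: {r.text!r} (listed owner: {r.owner or 'none'})")
```

Anything the audit flags is a candidate for the conversation step 1 asks for: find the person, or question whether the requirement should exist at all.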

Step 2: Delete the part or process.

The organizational default is to add. Add a step, add a check, add a field, add a ceremony. We add things “just in case,” and once something exists it tends to stay. Musk’s rule of thumb: if you’re not adding back at least 10% of what you deleted, you didn’t delete enough. The best part is no part.

This is where teams find the room to actually move. Every unnecessary step in a workflow, every feature nobody uses, every approval that exists because of a requirement nobody owns — that’s mass you’re carrying on every subsequent release. Delete aggressively, then see what you actually needed.
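The 10% rule of thumb is simple arithmetic, and a team could track it per cleanup pass. This is a minimal sketch; the function name `deleted_enough` and the example counts are assumptions for illustration.

```python
def deleted_enough(deleted_count: int, added_back_count: int, threshold: float = 0.10) -> bool:
    """Apply the rule of thumb: if less than `threshold` of what was deleted
    had to be added back, the deletion pass was probably too timid."""
    if deleted_count <= 0:
        return False  # nothing was deleted, so the pass has not really started
    return added_back_count / deleted_count >= threshold


# Hypothetical cleanup passes: (items deleted, items later restored).
print(deleted_enough(20, 1))  # 5% added back: not aggressive enough
print(deleted_enough(20, 3))  # 15% added back: the cut went deep enough to find the real needs
```

The check is deliberately crude. Its job is to prompt the question "did we cut deep enough to hit something we needed?", not to score the team.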

Step 3: Simplify and optimize.

This is the step most teams go to first. It’s also the most common mistake. Musk is direct: the most common error of a smart engineer is to optimize something that should not exist. Steps 1 and 2 exist precisely to prevent you from doing elegant, rigorous work on the wrong problem.

This is where the relationship between your fundamental measures matters. If you haven’t defined what good actually looks like — the real measure, not the proxy — you’ll optimize toward the wrong thing with great precision.

Step 4: Accelerate cycle time.

Now that you’re working on the right thing, shorten the feedback loop. Get to users sooner. Iterate faster. But Musk is explicit about the sequence: not before step 3. His line is worth keeping: if you’re digging your own grave, don’t dig it faster. Velocity without prior ruthless deletion just ships the wrong thing more efficiently.

Faster cycles only compound your learning if you’re learning the right things. Which brings us to the most underappreciated part of this framework.

Step 5: Automate.

Last. Musk admits he made this mistake himself on the Model 3 — he went backwards through all five steps, automating before deleting. Automation is a multiplier. If what you’re multiplying is still full of unexamined requirements and undeleted steps, you’ve automated a problem. Make sure it’s the right thing first.

From Good to Better to Best

The real value of this framework isn’t efficiency — it’s the shape of the learning it produces.

Most product teams think about progress as shipping features. The better mental model is moving from a good solution to a better solution to the best solution — and understanding clearly where you are in that progression at any given moment.

Steps 1 and 2 force you to confront what good actually means before you commit resources. Step 3 builds the good solution: stripped of everything that shouldn’t be there and optimized for what matters. Step 4 accelerates how fast you can learn whether your good solution is actually good, and what better looks like. Step 5 locks in what’s working so the team can direct its attention to the next problem.

This is what Pettit’s tyranny of the rocket equation is really pointing at. Every unnecessary thing you carry has to be paid for again at each stage that follows, and the bill compounds the further you want to go. The teams that get from good to better to best fastest are the ones who are most ruthless about what they’re carrying and most clear about where they’re trying to go.

The framework is simple. The discipline to apply it — especially when it means telling a smart person their requirement is wrong, or deleting something that took two sprints to build — is rare.

That’s the hard part. The framework is the easy part.